Adoption Asymmetry

Stray Narratives, Issue 02

In 1930, John Maynard Keynes published a short essay called “Economic Possibilities for Our Grandchildren.” It is, by any measure, one of the more charming documents in the history of economic thought. Writing in the teeth of the Great Depression, Keynes looked a hundred years into the future and concluded that the economic problem, the struggle for subsistence, would by then be solved. Technological progress would make human labour so productive that the average person would need to work only fifteen hours a week. The remaining time would be devoted to leisure, to culture, to the finer things that a civilisation freed from want might choose to pursue.

Keynes was, in one sense, spectacularly right. Productivity has increased roughly fivefold since 1930. The material standard of living in the developed world would be unrecognisable to Keynes’s contemporaries. The refrigerators are larger, the antibiotics are real, and the information once locked behind the doors of the British Library is available to anyone with a telephone and a willingness to squint at a small screen. By any reasonable accounting of output per hour, we passed Keynes’s threshold decades ago.

And yet. The working week has not shrunk to fifteen hours. In much of the professional economy, it has expanded. I can speak to this from personal experience: in thirty years of private banking, I cannot recall a single colleague who reported working fewer hours than the year before. The proliferation of email, of conference calls, of compliance requirements, of coordinating documents that require the input of fourteen people before they can be sent to fifteen others, has absorbed every productivity gain that technology has delivered and then demanded more. I do not think this is because my colleagues and I are unusually inefficient, though I would not wish to rule it out entirely. I think it is because Keynes made an error, and it is an error that is being repeated today in almost identical form.

The error was categorical. Keynes saw that machines could perform verifiable tasks, the physical and cognitive work that has a measurable output, and concluded that the total volume of work would shrink as machines absorbed it. What he did not foresee, and what I believe the current consensus on artificial intelligence does not foresee, is that when you make verifiable work cheaper, you do not reduce the total quantity of work. You change its composition. The economy does not contract. It reconstitutes. And the new work that appears is overwhelmingly of a kind that machines cannot do: contested, coordinative, dependent on negotiation between agents with different information and competing interests.

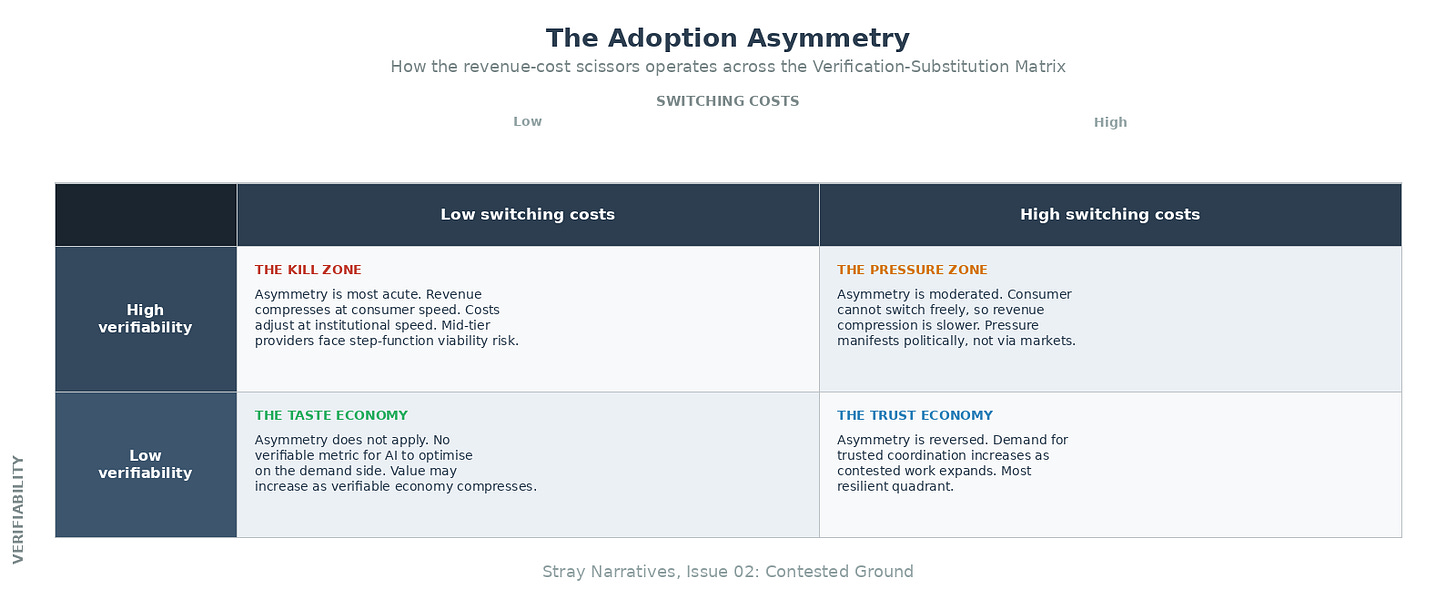

In the previous issue of Stray Narratives, I proposed a framework for understanding AI’s economic impact, built on the distinction between verifiable tasks and contested ones, and on the interplay between verifiability and switching costs that produces four very different market outcomes.[1] I also conducted a thought experiment about what happens when AI is placed in the hands of every consumer as a tireless optimisation engine, a sorting machine that collapses information asymmetry in every market where quality can be objectively measured.

Consider buying a house. The verifiable components of a property transaction are substantial and increasingly automatable. The comparable sales data for the neighbourhood can be retrieved in seconds. The mortgage calculations, once the province of a specialist, are trivially reproducible. The conveyancing process is largely a matter of checking titles, confirming boundaries, and ensuring that the appropriate searches have been conducted. A surveyor’s report follows a standard format and assesses the property against known criteria. An AI system can do all of this faster, more comprehensively, and more accurately than any individual professional.

But the transaction does not end with the verifiable. It barely begins there. The actual purchase of a house is a negotiation between two parties, each of whom possesses information the other does not, conducted through intermediaries who have their own incentives, under time pressure that is rarely symmetrical. The seller needs to close by a specific date for reasons she has not disclosed. The buyer’s surveyor has identified a crack in the wall that may or may not indicate subsidence, and the interpretation of its significance is a matter of professional judgment on which reasonable surveyors disagree. A chain of six transactions is involved, and one party three links down the chain has just had a mortgage offer withdrawn. The estate agent, who has been doing this for twenty years and knows both the neighbourhood and the personalities involved, picks up the telephone and finds a formulation that allows everyone to save face, adjust their expectations slightly, and proceed. There is no algorithm for this. There is no correct answer to be optimised. There are only positions, relationships, and the accumulated judgment that comes from having navigated a thousand such situations before.

This is the distinction that Henry Gladwyn, in his essay “Contested Ground,” draws between verifiable tasks and contested tasks.[2] Gladwyn uses a different example, drawn from the mining industry, but the principle is identical. When professionals sit around a table to negotiate a warranty schedule for a major asset, they are not solving a problem. They are defending positions. Each party has a different view of what the risks are, a different tolerance for exposure, and a different set of commercial pressures. The negotiation does not end with a correct answer. It ends with a settlement that all parties can live with, which is a fundamentally different kind of outcome. Chess ends with a winner. A warranty negotiation ends with a compromise. And the compromise requires something that no AI system currently possesses: the ability to read a room, to judge when a counterparty is bluffing, to know which concession will unlock the deal and which will be seen as weakness.

The key insight, and it is one I believe has not received the attention it deserves, is that every time technology makes verifiable work cheaper, it does not eliminate the contested work that sits alongside it. It makes the contested work a larger share of what remains, and frequently generates entirely new contested tasks that did not previously exist. When both sides of a negotiation have AI agents that can resolve the technical points in minutes, the negotiation does not get shorter. It reaches the contested ground sooner. The bots agree the numbers. The humans argue about what the numbers mean, who bears the risk, and whose institutional reputation is at stake. The question is no longer “what is the answer?” It is “who instructed the bot, and what were they trying to achieve?”

This is Keynes’s error in precise form. He saw the substitution, machines replacing labour in verifiable tasks, and concluded that work would shrink. He missed the reconstitution, the creation of entirely new categories of coordinative, relational, and contested work that emerged to absorb the freed capacity. There is no theoretical limit on the number of contestable tasks humans can dream up. A nation of geniuses will cure diseases and also be deployed on tasks that today seem trivial or absurd.[2] The point is not that this is wasteful. The point is that the economic system generates demand for human judgment in contested domains as fast as technology eliminates demand for human cognition in verifiable ones.

The direction, then, is clear. Contested work expands. But the direction is not the whole story, and I want to be honest with the reader about what makes the story uncomfortable, because it is the discomfort that contains the actual insight.

The question is volume. Not whether contested work expands, which it does, but whether it expands fast enough, in the right places, with the right skill requirements, to absorb the people displaced from verifiable work. And here the arithmetic is less reassuring than the theory.

Consider healthcare administration. The United States employs roughly two million people in billing, coding, claims processing, and insurance administration. A substantial share of this work is verifiable: matching procedure codes to coverage terms, processing claims against policy documents, flagging discrepancies. AI compresses this directly and is already doing so. The contested expansion is real. Every claim that cannot be resolved algorithmically, every coverage dispute, every prior authorisation appeal that involves clinical judgment and patient circumstance, every negotiation between provider and insurer over an ambiguous case: all of these are contested tasks that require human judgment. But the contested work requires different skills, different training, and different institutional positioning from the routine processing it notionally replaces. And the volume does not match. For every hundred billing clerks displaced, the system may need ten more skilled claims negotiators. The ratio is unfavourable and the retraining timeline is measured in years. The same arithmetic repeats across legal services, financial services middle offices, routine accounting, and standardised professional services: enormous headcounts performing substantially verifiable tasks, with a contested layer that will expand but cannot absorb the displaced volume on anything like a one-for-one basis.
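For the reader who prefers to see the arithmetic written down, a minimal sketch in Python follows. It is an illustration, not an estimate. The workforce of two million and the ratio of ten new roles per hundred displaced come from the paragraph above; the function name `absorption_gap` and, in particular, the 50 per cent verifiable share are assumptions introduced purely for the example, since the text says only "a substantial share."

```python
def absorption_gap(workforce, verifiable_share, absorbed_per_hundred):
    """Return (displaced, absorbed, gap) in headcount for one sector.

    workforce            -- people employed in the sector
    verifiable_share     -- fraction of them doing verifiable work (ASSUMPTION)
    absorbed_per_hundred -- new contested roles created per 100 displaced
    """
    displaced = workforce * verifiable_share
    absorbed = displaced * absorbed_per_hundred / 100
    return displaced, absorbed, displaced - absorbed


# Two million workers and ten-per-hundred are the figures used in the text.
# The 0.5 verifiable share is a placeholder assumption, not a measurement.
displaced, absorbed, gap = absorption_gap(2_000_000, 0.5, 10)
print(f"displaced: {displaced:,.0f}")
print(f"absorbed into contested roles: {absorbed:,.0f}")
print(f"left unabsorbed: {gap:,.0f}")
```

Whatever share one assumes, the unabsorbed fraction of the displaced is fixed by the ratio alone: at ten per hundred, nine in ten displaced workers are not absorbed by the contested layer of the same sector. Changing the share changes the size of the problem, not its shape.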

The honest synthesis is this: the direction is right. Contested work does expand. There is no theoretical limit on the contestable tasks the economy can generate. But the practical limit is the transition: the time it takes for displaced workers to retrain, for new institutional structures to form around contested work, for the economy to generate enough demand for human judgment to absorb the supply of people freed from verifiable tasks. The historical precedent is not encouraging on the timeline. The transition from agricultural to industrial employment took decades and involved enormous social dislocation. The transition from manufacturing to services took a generation. The optimists are asking us to believe that this transition will be faster because the economy is more adaptive. Perhaps. But the burden of proof is on them, and I have not yet seen it met.

There is, however, a more specific reason the transition is likely to be painful, and it has nothing to do with whether AI replaces workers directly. It has to do with which side of the business AI hits first. I want to introduce a concept here that I believe the consensus is missing, and I shall call it the Adoption Asymmetry. I am aware that naming one’s own concepts is the kind of thing that sounds better to the author than to the reader, but I ask for the indulgence on the grounds that what follows is, I think, genuinely important.

Recall the demand-side sorting machine from the previous issue. The private consumer faces none of the barriers that slow institutional adoption. There is no compliance department, no procurement cycle, no legacy system. The individual downloads an application and begins using it. The search cost drops to approximately zero. The consumer identifies the best provider and switches, or demands transparency from the incumbent, immediately. This is the demand side, and it operates at what I shall call consumer speed.

Now recall the institutional resilience I described: the grammar of organisational life, the legal liability that attaches to every decision, the regulatory frameworks, the accumulated trust between agents within the institution. AI cannot be deployed autonomously within these structures until the legal frameworks clarify accountability, until compliance departments sign off, until the technology has been tested in human-supervised parallel operation for what is typically twelve to eighteen months. The first phase of institutional AI adoption is additive to headcount, not subtractive: you need the AI system and the human supervisor. Displacement comes only later, once confidence is established, once the institutional muscle memory changes. This is the supply side, and it operates at institutional speed.

The result is what I call the Adoption Asymmetry: a revenue-cost scissors in which the top line compresses at consumer speed while the cost base adjusts at institutional speed. The margin collapses in between.

Let me make this concrete. Return to the insurance example from the previous issue. The consumer’s AI agent has compared every policy in the market. It has read the full policy documents, identified the exclusions buried in clause 14(b), cross-referenced claims satisfaction data with pricing. The best insurer in each category gains share. The second-best insurer loses it. This happens at consumer speed, which is to say it happens as fast as consumers adopt the AI tools, and consumers face none of the institutional barriers that slow corporate adoption.

But the second-best insurer cannot reduce its cost base at the same speed. Its claims processing operation is run on legacy systems embedded in regulatory frameworks that require human sign-off. Its compliance department will not approve autonomous AI workflows until the regulator provides guidance, and the regulator is, charitably, not known for the speed of its deliberations. Its labour agreements constrain the pace of headcount reduction. Its institutional grammar, the processes and protocols through which hundreds of employees coordinate their work, cannot be rewritten on the timescale that the revenue decline demands.

Revenue declines immediately. Costs adjust over years. The margin is where the business dies.
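The scissors can be sketched as a toy model in Python. Every number below is hypothetical and chosen only to show the shape of the mechanism: revenue falls each year from the outset, while the cost base cannot begin to fall until a lag has elapsed. The function names, the rates of decline, and the two-year lag are my assumptions for the example, not figures from any real insurer.

```python
def scissors(revenue, cost, revenue_decline, cost_decline, cost_lag_years, years):
    """Simulate yearly revenue, cost and margin under the Adoption Asymmetry.

    Revenue shrinks every year (consumer speed). Cost shrinks only after
    cost_lag_years have passed (institutional speed). All inputs are
    illustrative assumptions.
    """
    rows = []
    for year in range(1, years + 1):
        revenue *= 1 - revenue_decline
        if year > cost_lag_years:
            cost *= 1 - cost_decline
        rows.append((year, revenue, cost, revenue - cost))
    return rows


def first_loss_year(rows):
    """Return the first year in which the margin is negative, or None."""
    for year, _revenue, _cost, margin in rows:
        if margin < 0:
            return year
    return None


# Hypothetical mid-tier business: revenue 100, costs 85, so a margin of 15.
# Revenue falls 10% a year at once; costs fall 3% a year after a two-year lag.
asymmetric = scissors(100, 85, 0.10, 0.03, 2, 6)
# Counterfactual: costs could fall as fast as revenue, with no lag.
symmetric = scissors(100, 85, 0.10, 0.10, 0, 6)

for year, revenue, cost, margin in asymmetric:
    print(f"year {year}: revenue {revenue:6.1f}  cost {cost:6.1f}  margin {margin:6.1f}")
print("first loss year, asymmetric:", first_loss_year(asymmetric))
print("first loss year, symmetric:", first_loss_year(symmetric))
```

The counterfactual is the instructive part. When costs can fall in step with revenue, the business shrinks but the margin never turns negative. It is the difference between the two clocks, not the decline itself, that pushes the margin below zero.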

This is the mechanism by which mid-tier businesses in verifiable sectors cross viability thresholds. Not through direct automation of their workforce, which is the mechanism the consensus discusses and which institutional inertia genuinely slows. But through the destruction of their revenue by a consumer who now has perfect information, while their cost base remains locked in institutional time.

And before the business dies, it does what businesses under margin pressure have always done first: it leans on compensation. Hiring freezes arrive before layoffs. Bonus pools shrink before headcounts do. Salary increases are deferred, then cancelled, then replaced by real-terms cuts disguised as flat nominal pay. The scissors compresses wages before it eliminates jobs, which means that the labour market effects of AI may manifest not as the dramatic unemployment the pessimists fear, but as a long, quiet period of stagnant or declining real wages across every sector exposed to the asymmetry. And the suppression propagates beyond the directly affected industries. A mid-level professional in an adjacent sector who might otherwise negotiate a raise is aware that similar roles elsewhere are under pressure. The threat of displacement, even before actual displacement occurs, shifts bargaining power toward the employer. This is the same mechanism that operated during the offshoring wave: the mere possibility of relocation suppressed wages in jobs that were never actually moved. The wage effect arrives first, arrives broadly, and arrives silently.

I want to add two qualifications, because I think they matter and because I do not wish to overstate the case. First, the human-supervision testing period is a genuine brake on the supply side, and it buys real time. But it has a natural expiry. Once a system has been running alongside humans for a year or more and the error rates are demonstrably lower than the human baseline, the economic pressure to remove the supervisor becomes very difficult to resist, particularly if competitors have already done so. The supervision phase buys time. It does not buy a permanent reprieve. Second, the asymmetry is most acute in the Kill Zone, where consumers can compare and switch freely. In the Pressure Zone, where switching costs are high, the demand-side compression is slower and the political dynamics I described in the previous issue dominate instead. The asymmetry is not uniform. But where it applies, it is severe.

The Adoption Asymmetry is, I believe, the mechanism the consensus is missing. The standard bear case says AI replaces workers directly. The standard bull case says institutional friction prevents this. Both are looking at the supply side. The asymmetry shows that the damage arrives from the demand side, on a faster clock, before the supply side has time to adapt. And the damage is not to workers directly. It is to the businesses that employ them, which is a fundamentally different causal pathway with fundamentally different consequences.

I have spent this issue on what I hope is a rigorous examination of two questions. First, what happens to contested work when verifiable work gets cheaper? The answer is that it expands, but not fast enough, and not in the right shape, to absorb the displacement on a comfortable timeline. Second, what mechanism drives the displacement? The answer is not direct automation, which institutional inertia genuinely slows, but a revenue-cost scissors in which demand-side compression, driven by AI-empowered consumers, arrives years ahead of supply-side adjustment.

But there is a further question that the Adoption Asymmetry raises, and I have deliberately left it for the next issue. If the second-best insurer, the mid-tier law firm, the regional financial services provider, is dying in the scissors, why don’t new AI-native competitors simply step in and capture the market? If the incumbents are too slow to adjust their costs, surely a startup built from scratch on AI-native workflows can offer the same service at a fraction of the cost and take the business?

The answer, I shall argue, is that it cannot, for reasons that have nothing to do with technology and everything to do with distribution. And the implications of that answer, for where value migrates when the verifiable half of the economy compresses, are both surprising and, for the investor willing to think carefully, rather consequential. The market is pricing the kilowatt-hours. I intend to follow them, but not in the direction the consensus expects.

The Adoption Asymmetry: A Quick Reference

The demand-side sorting machine (consumers using AI to compare, verify, and switch) operates at consumer speed: no compliance department, no procurement cycle, no legacy architecture. The supply-side response (companies adopting AI to reduce costs) operates at institutional speed: gated by legal liability, regulatory approval, human-supervision testing, labour agreements, and the institutional grammar through which organisations coordinate.

The result is a revenue-cost scissors: top-line compression arrives years ahead of cost-base adjustment. The margin collapses in between. Mid-tier businesses in verifiable sectors cross viability thresholds not through direct workforce automation but through demand-side revenue destruction.

Stray Narratives is published when the market demands a closer look. The next issue will examine why new AI-native competitors cannot resolve the Adoption Asymmetry, and what this means for where value migrates when the verifiable economy compresses.

References

[1] Stray Narratives, Issue 01: “The Sorting Machine.” The frameworks for cognitive vulnerability vs. institutional resilience and the Verification-Substitution Matrix are developed in full in that issue.

[2] Henry Gladwyn, “Contested Ground.” The distinction between verifiable and contested work, the observation that making verifiable work cheaper causes contested work to expand, and the formulation about a “nation of geniuses” are drawn from this essay. Gladwyn’s account of professional work as negotiation in contested space, rather than problem-solving with verifiable outcomes, underpins the analysis presented here.