The Wire is Not the Business

Stray Narratives, Issue 07

Stray Narratives is published when the market demands a closer look. Nothing in this publication constitutes investment advice. All views are those of the author. Please read our full disclaimer.

There is a question worth asking before the quarterly results, before the capex disclosures, before the infrastructure pipeline numbers. It is not whether AI works. It does. It is not whether AI will make businesses more efficient. It will. The question is whether making everyone more efficient at the same time, using the same tools at roughly the same cost, makes anyone more profitable.

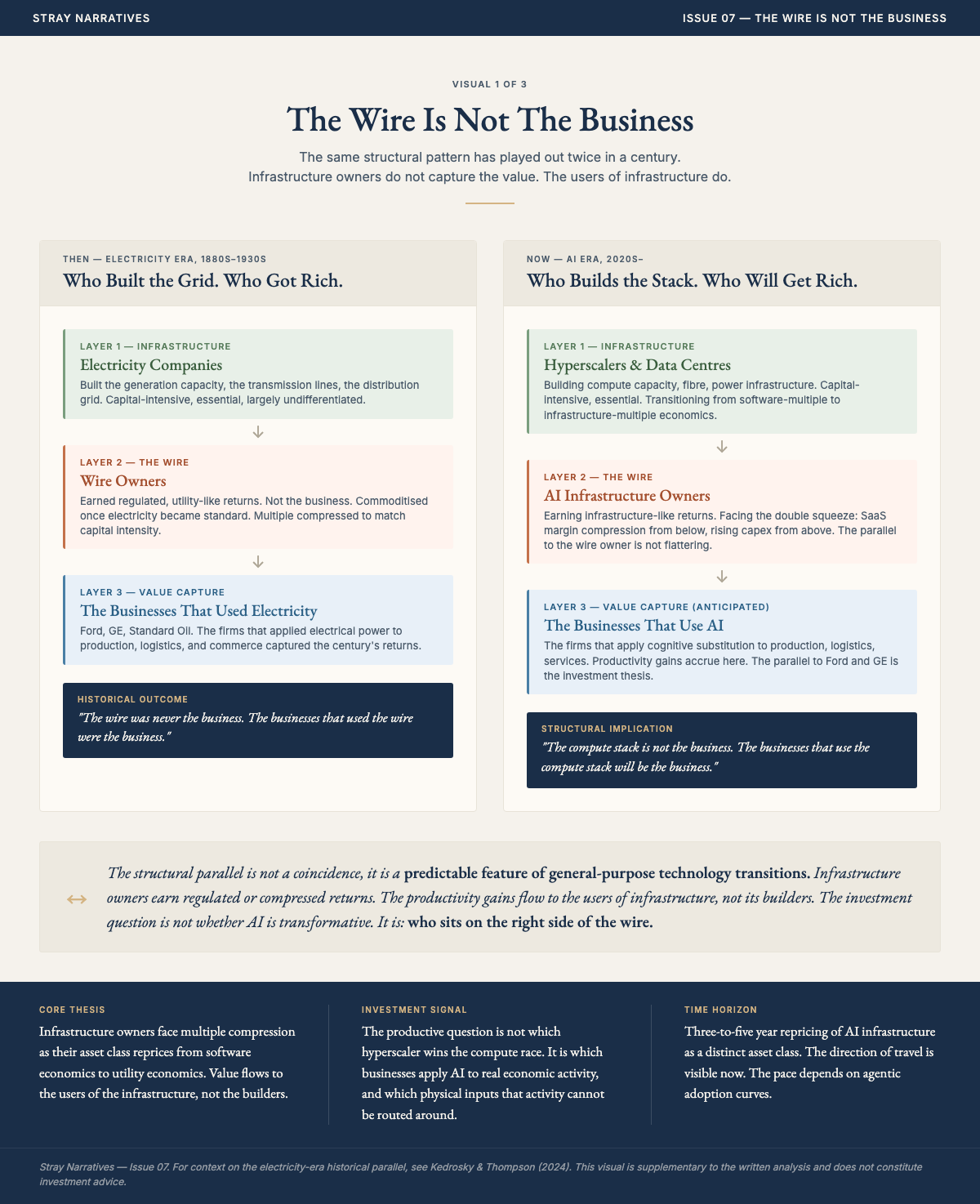

Electricity is the answer. When rural electrification arrived in the early decades of the last century, it transformed productivity across every sector it touched. Farms, factories, shops, offices, all became measurably more efficient. The companies that owned the wires and the generators did not become the dominant businesses of the century. The value flowed to the users of electricity, not its suppliers, because access became universal and the efficiency gains were competed away through lower prices and higher output expectations. The wire was not the business. What the wire enabled was not the business either. The business was what you built once reliable power was a given and your competitors had it too.

AI is the new wire. Access to it is becoming a baseline operating condition. The productivity gains it delivers will be real and they will be available, at broadly comparable cost, to every firm in every sector simultaneously. That is not a reason to be pessimistic about AI. It is a reason to be precise about where the value actually goes.

It does not, in the main, go to the companies building the infrastructure.

A brief detour through history

The historian Carlota Perez spent considerable time studying what happens when genuinely transformative technologies arrive. Canals, railways, rural electrification, fibre optics. Her finding, somewhat inconvenient for anyone hoping for a clean story, is that bubbles and golden ages tend to arrive in sequence rather than as alternatives. The bubble is not the opposite of the revolution. It is, rather annoyingly, the precondition for it.

The railroad bubble of the 1870s wiped out a remarkable number of companies and banks[1]. The railroads it financed also settled the American West and accounted for sixty-two percent of total US market capitalisation by 1900. Both things were true simultaneously. Arguing that AI is a bubble is not the same as arguing AI does not matter. It is simply arguing that the path from here to the golden age runs through some territory the current valuation of the sector has not fully priced.

What the market has not fully priced is not the technology. It is the transformation of the companies building it.

What the hyperscalers are becoming

Imagine a toll road. The owner built it, owns it, and collects tolls from everyone who uses it. The economics are straightforward and rather pleasant. The marginal cost of one more car is close to zero. As traffic grows, margins expand. This is what the large technology platforms have been for the past twenty years. Asset-light, high-margin, and protected by the simple fact that everyone needed to use the road.

Now imagine the government tells the toll road owner that it must also build the next hundred miles of highway, fund the electricity grid that powers the tunnel lighting, sign fifteen-year fixed-price contracts for construction materials, and accept a regulated return on the new sections. The original toll revenue is still coming in. But the company is now simultaneously a construction firm, a utility, and a regulated infrastructure operator. Its balance sheet looks different. Its risk profile looks different. And a sensible investor, asked to value it, would reach for a rather different multiple.

This is what is happening to the hyperscalers. The five largest technology companies by capital expenditure are forecast to spend the equivalent of more than two percent of US GDP this year. To put that in context: it exceeds the peak railroad buildout of the nineteenth century, the shale energy boom, and the fibre optic spending of the dotcom era. Historic is now the correct word.

The reason only the largest incumbents can build this infrastructure is not mysterious. The bottleneck has shifted from acquiring chips to securing land, substations, transformer capacity, and the utility relationships that allow a data centre to move from contracted power, which is merely a promise, to connected power, which means actual electrons flowing into actual buildings. Nearly half of planned US data centre builds this year are expected to be delayed or cancelled, and when challenger capacity is cancelled it is the smaller operators who fall away first. The incumbents retain pricing power precisely because physical execution is so hard to replicate.

So only they can build it. And building it is changing what they are.

The technology platforms have historically derived their monopoly power from three sources: economies of scale, network effects, and proprietary technology. AI is eroding all three simultaneously. Economies of scale are undermined because AI lowers the fixed cost of software development for everyone, including the hyperscalers’ own customers. The Catholic Church initially welcomed the printing press as a useful tool for spreading its message. The printing press had other ideas. Network effects are threatened by AI agents that sit between the user and the platform, potentially reducing what is now a coveted destination to a repository of content. Proprietary technology is undermined by the degree to which the underlying AI research has remained open source.

The result is a double movement. Software margins compressing from below, as the intelligence commodity deflates and barriers to entry fall. Infrastructure costs accumulating from above, as the physical build-out obligation grows. The balance sheet in the middle absorbing pressure from both directions at once.

The market has begun to notice. Two years ago, every dollar of AI capital expenditure announced was rewarded with two dollars of additional market capitalisation. A year ago, the ratio had fallen to one for one. It has since reversed. Forward multiples across the hyperscaler class have been compressing, the market beginning to price these businesses not as asset-light software platforms but as capital-intensive infrastructure operators. It is not saying they will fail. It is adjusting the multiple to reflect what they are becoming.

Not all hyperscalers sit in the same position. The class looks uniform from a distance but three structural variables separate the well-positioned from the vulnerable: custom silicon, cloud revenue, and edge inference capability. The companies that combine all three occupy a fundamentally different economic position from those that have one or none.

Custom silicon is the most widely discussed. Google has developed custom tensor processing units at a scale that makes it substantially independent of external chip supply. Amazon’s custom chip programme, covering its Graviton processors, Trainium AI chips, and Nitro networking infrastructure, has reached a comparable position. Both companies are avoiding what might be called the Nvidia margin tax: the substantial gross margins that a company dependent on external silicon pays to its chip supplier before it earns anything from its own customers. The custom silicon route is not without risk, but it restructures the cost base in a way that external dependence cannot.

Cloud revenue is the variable the market has not fully separated from the capex story. Amazon, Microsoft, and Google operate cloud platforms that generate external revenue from the same infrastructure they are building. Every dollar of data centre capex serves both their internal AI workloads and their paying cloud customers. The infrastructure is a cost centre and a revenue centre simultaneously. Meta and Apple do not have cloud businesses. Their infrastructure spending is pure cost against advertising revenue or hardware margins, with no external customer base to amortise the investment. The distinction matters because it determines whether the capex obligation is self-funding or parasitic on existing margins. A company building infrastructure that other companies pay to use is in a structurally different position from one building infrastructure that only serves its own products.

Edge inference is the variable that almost no one is pricing correctly, and it cuts both ways. If AI processing migrates from centralised data centres to devices, running on the neural processing units now embedded in every flagship smartphone and laptop, it reduces long-term demand for centralised compute. Apple is better positioned for this shift than any other company in the class. Its on-device inference capability, built on years of custom silicon for iPhones and Macs, means it can deliver AI features without routing every query through a data centre. Google and Qualcomm are investing heavily in the same direction. The implication for the infrastructure thesis is not that edge AI kills it, but that it shortens the constraint window. Training and heavy agentic workloads remain centralised for the foreseeable future, but the assumption that inference demand scales linearly into data centres deserves more scrutiny than it is receiving. Edge erosion is a risk to the duration of the physical constraint, not to its existence.

The combination is what matters. Google and Amazon have custom silicon, cloud revenue, and growing edge capability. They sit in the strongest structural position. Microsoft has cloud revenue and corporate integration but depends on external silicon, a vulnerability it is addressing but has not yet resolved. Meta has custom silicon ambitions but no cloud business and no meaningful edge presence, making its infrastructure spend the most concentrated bet in the class. Apple has the strongest edge AI position and the most advanced device silicon but no cloud business, which means it captures the inference shift without participating in the infrastructure buildout at all.

The position of Microsoft deserves specific attention because it is the most complicated case. It is the most deeply embedded of any technology company in corporate operational infrastructure. Office 365, Teams, and Azure are not easily substituted, and that integration gives it a genuine platform durability that the infrastructure cost argument does not simply override. Some of the multiple compression across the sector reflects the broader re-rating of software businesses rather than a specific infrastructure judgement. A company with Microsoft’s level of corporate penetration may prove more resilient in the AI transition than a clean reading of the electricity parallel would suggest.

Four points, stated plainly. First, the hyperscalers are being repriced from software to infrastructure economics. This is a direction, not a destination, and the transition is incomplete. Second, within the class, the companies best positioned to absorb the double squeeze are those that combine custom silicon, cloud revenue, and edge inference capability. Third, cloud revenue is the underappreciated buffer: it turns infrastructure capex from a pure cost into a revenue-generating asset, and the market has not yet fully differentiated between hyperscalers that have this buffer and those that do not. Fourth, edge AI compresses the duration of the centralised infrastructure constraint, which matters for how long the physical bottleneck thesis remains operative, and for which companies emerge strongest on the other side of the buildout.

The honest counterargument

There is a scenario in which compute scarcity itself becomes a source of pricing power. If the physical bottleneck proves as durable as the evidence suggests, and if only the incumbents have the execution depth, then the survivors may find their earnings revised upward even as their multiples compress toward utility economics. Inference prices across the major platforms have been edging upward rather than downward in recent months. Token credits are being rationed. API pricing is being revised. The consensus has not yet noticed.

The mechanism is traceable. Providers absorbed cost gaps through 2025 to win market share. High-end compute costs then surged. Agentic workflows arrived, consuming tokens at multiples of traditional chat interfaces, and the cost buffers that cloud providers had been willing to carry ran out. The compute repricing is not a projection. It is already visible in how the major platforms are pricing their inference products.

The difficulty with dismissing this counterargument is that it does not operate on the same time horizon as the electricity parallel, and conflating the two produces a false resolution. The infrastructure repricing thesis (compute becomes structurally more expensive, and the physical asset layer captures a larger share of AI profits) operates on a three-to-five-year horizon. The electricity parallel (intelligence eventually commoditises, and value flows to users) operates on a fifteen-to-twenty-year horizon. Both can be true simultaneously. This thesis does not require the short-run infrastructure repricing to be wrong. It requires only that the transition period eventually ends, which it will. The honest acknowledgement is that for investors operating on a three-to-five-year horizon, the infrastructure pricing power thesis may be the more operationally relevant frame, even for those who accept the long-run electricity parallel.

The most plausible version of the longer story is that Chinese efficiency work ultimately shortens the window. Chinese laboratories have demonstrated that inference costs can be reduced by an order of magnitude through algorithmic efficiency rather than raw compute. Where Western firms have responded to capability demands by building larger data centres, Chinese competitors have responded by making the models themselves more efficient, achieving comparable results at a fraction of the energy and capital cost. The Chinese efficiency argument is real and should not be dismissed. What it does not resolve is the near-term physical constraint: the agentic workloads driving current infrastructure demand are not yet efficiently substitutable, and the disclosed US data centre pipeline of over two hundred gigawatts[3], against active development capacity that can deliver only a fraction of that in any given year, ensures the physical squeeze persists for years rather than quarters. The efficiency gains compress the duration of the constraint. They do not eliminate it.

This returns us to the electricity parallel. The early electricity companies enjoyed pricing power during the period when generation capacity was scarce. That pricing power eroded as access became universal. The question is not whether AI intelligence will eventually become a cheap commodity. It will. The question is how long the transition period lasts, and who captures the rents during it.

What the bull case gets wrong

There is a number that has been doing considerable damage to clear thinking about AI revenue. It is the growth rate of coding agent usage since late 2025. When autonomous coding tools arrived, their adoption was rapid and their token consumption was extraordinary. Analysts, confronted with a line on a chart going in a direction lines rarely go, did what analysts tend to do. They extrapolated it.

The extrapolation runs into two problems, and both turn on what kind of work is actually being done.

The first is the nature of the work itself. Coding is deterministic and expansive. The code either runs or it does not. Writing it generates more code, test routines, build routines, debugging cycles, each consuming additional tokens. Agentic systems (autonomous sequences of tasks that run continuously, without human intervention between steps, and maintain state across hours or days of operation) are similarly expansive, consuming energy at a multiple of a standard query. The compression argument that follows applies to the remainder of knowledge work, not to these categories. Most white-collar work is neither deterministic nor expansive. It is compressive and probabilistic. You have a large report. You want the key points. The AI takes many tokens in and produces few out. There is no expanding chain of output. The task is done and the token consumption ends.

The second problem is more fundamental. A significant portion of knowledge work does not create genuine economic value. It exists because the world is opaque, fragmented, and difficult to cross without a guide. Intermediaries who exist because buyers and sellers cannot find each other efficiently. Compliance layers that exist because processes are too complex to follow without interpretation. Reconciliation work that exists because systems do not communicate. Advisory functions that exist because complexity makes self-service impossible. When AI compresses that complexity, this work does not get automated. It gets eliminated. The job ceases to exist not because a machine does it more efficiently, but because the problem it solved ceases to exist. The total addressable market for AI-assisted knowledge work is therefore smaller than the projections assume, because part of what those projections counted as economic activity was friction dressed as value. AI is not replacing it. It is revealing it.

This is where the electricity parallel bites again. Electrification made every business more productive. It did not cause every business to consume electricity in proportion to its productivity gains. The assumption that AI token consumption scales linearly with white-collar adoption is the equivalent of assuming that every electrified farm would consume power in proportion to the acreage it tilled. They did not. Neither will offices.

There is a further confusion embedded in the productivity data. Measured US productivity has been rising at rates not seen in a decade. The immediate reaction has been to attribute this to AI. The actual cause is arithmetic. When investment rises sharply and hours worked remain constant, measured productivity rises as a matter of definition. The capital expenditure on AI data centres is lifting the investment component of GDP. That is a statistical artefact, not a productive miracle. The market has been reading the artefact as confirmation of the demand thesis. It is not.
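The arithmetic can be sketched in a few lines. This is an illustration only: the index values are hypothetical rather than real data, and output is simplified to consumption plus investment, which is an assumption of the sketch and not how national accounts are fully built.

```python
def labour_productivity(consumption, investment, hours):
    """Measured output per hour, with output simplified to consumption + investment."""
    return (consumption + investment) / hours


# Hypothetical index values, not real data.
before = labour_productivity(consumption=80.0, investment=20.0, hours=100.0)

# Investment rises by a quarter; consumption and hours worked do not move.
after = labour_productivity(consumption=80.0, investment=25.0, hours=100.0)

growth = after / before - 1.0
print(f"measured productivity growth: {growth:.1%}")  # 5.0%
```

Nothing about how anyone works has changed between the two lines. The measured gain comes entirely from the investment term in the numerator.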

The physical wall

The US electrical grid handles roughly fourteen percent of the nation’s total energy flow[2]. The remaining eighty-six percent moves through liquid fuels, natural gas pipelines, and food. A human worker draws energy from all of these systems. An AI data centre draws exclusively from the fourteen percent. When you replace human cognitive work with machine cognitive work, you are not substituting one energy source for another. You are attempting to route a vastly larger share of economic activity through a grid that was not built to carry it.

The disclosed US data centre pipeline stands at over two hundred gigawatts[3], up more than one hundred and fifty percent year on year. Only a third is under active development. Almost half of planned builds this year are expected to be delayed or cancelled. The bottleneck is not capital and not demand. It is the physical execution layer.

Transformers are the most instructive case. Before 2020, a large power transformer arrived roughly two years after ordering. The combined pressure of AI construction, grid expansion, and industrial reshoring has pushed delivery times to five years in some cases, with prices up fifty percent. American manufacturing cannot meet domestic demand. The solution has been to import from China. US utilities imported more than eight thousand high-power transformers from China in the first ten months of last year, against fewer than fifteen hundred in the whole of 2022[4]. The country engaged in strategic competition with China over AI supremacy is dependent on Chinese supply chains to build the infrastructure that competition requires.
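The two import figures cover different lengths of time, so the comparison is clearer as a monthly run rate. A rough sketch, using the rounded bounds quoted above ("more than eight thousand" and "fewer than fifteen hundred") as if they were exact counts, which makes the result a floor rather than an estimate:

```python
# Rounded bounds from the text, treated as exact counts for illustration.
imports_recent = 8000   # first ten months of last year ("more than")
months_recent = 10
imports_2022 = 1500     # whole of 2022 ("fewer than")
months_2022 = 12

monthly_recent = imports_recent / months_recent   # units per month
monthly_2022 = imports_2022 / months_2022         # units per month

ratio = monthly_recent / monthly_2022
print(f"monthly import rate is at least {ratio:.1f}x the 2022 rate")  # 6.4x
```

Because the recent figure is a lower bound and the 2022 figure an upper bound, the true multiple is higher than the one printed.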

New large power transformer manufacturing capacity requires five to seven years from investment decision to first commercial shipment, gated by sequential constraints in specialised steel production, winding capacity, and testing infrastructure. American manufacturing cannot respond to demand at speed, not for want of capital or will, but because the production bottlenecks cannot be resolved within the buildout window. This is not a pipeline risk or a forecast. It is the current state.

There is a further constraint that compounds the physical one. Regulatory frameworks designed to accelerate grid queue access, which allow interruptible loads to move to the front of the interconnection queue, are structurally unavailable to the workload class that most needs them. Agentic AI systems run continuously, maintaining state across multi-step tasks that cannot be paused without losing their entire context. The regulatory fast lane was designed for a load type that can be interrupted. The load type driving the most urgent infrastructure demand cannot.

The cost of natural gas, the primary fuel for new data centre power generation, has remained contained. The cost of electricity has not. The gap between the two is the cost of a transmission layer not designed for current loads. There is plenty of cheap gas. Turning it into cheap power at the point of consumption requires infrastructure that does not yet exist in sufficient quantity.

A displaced worker does not immediately stop drawing energy from the grid. They still heat their home, still charge their car, still consume electricity as before. Meanwhile the data centre that replaced their work adds a new industrial load. And electrification policies are simultaneously redirecting existing energy consumption onto the same constrained infrastructure. Three forces pressing on the same copper wires. The grid’s share of total energy must rise. Raising it requires rebuilding the transmission layer. The transmission layer is the binding constraint.

The compound problem

Capital is committed and spent long before a data centre generates revenue. Equipment is ordered, construction begins, depreciation clocks start, and debt is serviced, all on a timeline that precedes physical completion by years. When delivery slips, as the pipeline data suggests it is for nearly half of planned builds, the gap between cash out and cash in widens. The asset base meanwhile depreciates faster than the accounting schedules acknowledge. Chips used for frontier model training degrade in eighteen months, not the five to six years on official schedules[1]. The financial pressure accumulates in the gap between what the accounts show and what the physics dictates.
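The gap between the accounts and the physics can be put in numbers. The sketch below assumes straight-line depreciation with zero salvage value and takes 5.5 years as the midpoint of the "five to six years" schedule. Both are simplifying assumptions made for illustration, not a description of any company's accounting policy.

```python
def book_value(cost, life_years, age_years):
    """Straight-line book value with zero salvage value (an assumption)."""
    remaining = max(0.0, 1.0 - age_years / life_years)
    return cost * remaining


cost = 100.0          # capex per chip, as an index
official_life = 5.5   # midpoint of "five to six years" (assumption)
economic_life = 1.5   # "eighteen months", per the text

age = 1.5  # years in service
on_books = book_value(cost, official_life, age)
economic = book_value(cost, economic_life, age)

gap = on_books - economic
print(f"book value {on_books:.1f}, economic value {economic:.1f}, gap {gap:.1f}")
```

On these assumptions, roughly 73 of every 100 units spent on training chips is still carried on the balance sheet at the point where the text's eighteen-month figure says the asset is spent.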

The electric vehicle industry is instructive. A genuinely important technology, rapid adoption, massive capital investment, and consistent failure to generate profits across most of the sector despite years of trying. The infrastructure was real. The demand was real. The economics were, and in many cases remain, elusive. AI need not follow exactly the same path. But the parallel is worth sitting with, particularly given that the AI infrastructure buildout is an order of magnitude larger than anything the electric vehicle industry attempted.

Where the value actually goes

The electricity parallel points toward a specific conclusion. When a transformative technology becomes universally accessible, the value does not accumulate in the wire. It accumulates in three places.

The first is the scarce physical inputs the wire requires. Copper is the transmission metal for the grid that AI demands. Uranium provides the baseload power that data centres need around the clock. Natural gas is the fastest deployable generation technology for the transition period, but the investment case is not in the commodity. It is in the pipeline infrastructure that moves it.

Gas pipeline operators with long-term contracted throughput agreements are a distinct sub-asset class from commodity gas exposure, and the distinction matters. The companies that matter here are not those selling gas at spot prices but those that have signed decade-long take-or-pay agreements with hyperscaler counterparties, committing to deliver a fixed volume regardless of what the market does, in exchange for a committed revenue stream that sits on the balance sheet like a bond. Regional gas price spreads in the corridors where data centre construction is most concentrated have expanded materially, the market’s way of saying the pipe is filling up before most of the demand has arrived.

Every data centre, regardless of who builds or operates it, must connect to every other data centre through optical fibre. Every optical transceiver, the hardware that converts electrical signals to light for long-distance transmission, requires indium phosphide as a substrate for its laser sources. Demand for these components has grown substantially faster than production capacity has expanded. China controls the majority of refined indium and introduced export controls in early 2025, creating a geopolitical supply constraint that cannot be engineered around quickly. This is a physics-limited bottleneck on the infrastructure that allows AI systems to communicate at scale, and it is almost entirely absent from public analysis of the buildout. Dark fibre routes in secondary hyperscaler markets, the owned rights-of-way through which this traffic flows continuously, represent a related asset class: independent of which model or platform wins the intelligence competition, the light must travel somewhere.

The pattern repeats at multiple points in the supply chain. Physics-limited upstream materials meeting exponentially growing demand produce the same structural dynamic at each layer. The constraint does not care which company’s logo is on the data centre.

The second is the broad economy rather than the technology sector itself. When AI access is universal, the businesses that benefit most are those in fragmented, relationship-dependent, or operationally complex sectors where embedding AI deeply restructures the cost base before competitors do the same. Industrials using AI to extract efficiency from physical operations. Financial services firms with proprietary data that becomes more valuable as generic intelligence becomes cheap. Healthcare, where data ownership creates durable advantage even when the underlying model is commoditised. The equal-weight index is the blunt instrument version of this thesis. It began outperforming the market-capitalisation-weighted index in 2025 and the direction reflects something real: earnings upgrades are migrating from the technology sector toward the rest of the economy. This is not a rotation driven by lower interest rates. It is a rotation driven by earnings.

The third is assets that hold their value regardless of which technology wins. Gold deserves specific attention here, and not for the conventional reason. Michael Green, a market strategist who has spent considerable time auditing the energy economics of the AI transition, identified something striking earlier this year. The historical relationship between gold and real interest rates has broken down. For decades when real rates rose, gold fell, because the opportunity cost of holding a non-yielding metal increased. Since 2022 that relationship has inverted. Gold and real rates are rising together. Green’s interpretation is that this is not an inflation signal in the traditional sense. It is a political signal. The transition from a human-led to a machine-led economy requires coordinated infrastructure investment at a scale that democratic political systems struggle to sustain. It requires telling voters that near-term disruption is the price of long-term gain, which is not a message that wins elections. Gold, in his reading, is rising because investors are beginning to price the possibility that the institutions responsible for managing this transition lack the political will to do it in an orderly way. It is a hedge against governance failure rather than against price level changes.

What to be cautious about is the shorter list. Software-as-a-service businesses whose moats rest on switching costs rather than proprietary data face a structural challenge that private market valuations have not yet fully absorbed. The large technology platforms are not uninvestable, but the direction of multiple compression across the class is a signal about what the market is beginning to understand about their cost structures. The specialist GPU cloud operators are the most exposed to a correction in the scarcity premium, because their revenue depends entirely on the incumbents continuing to need external capacity at current pricing, and Chinese efficiency work is shortening the window in which that condition holds.

The wire is not the business. It never was. The businesses built on cheap and universal electricity are where the twentieth century’s wealth was made. The equivalent businesses for the AI era are, in many cases, not yet visible in their mature form. They will not be found in the current composition of the major indices. They will be found in the sectors and geographies that a decade of capital flowing toward software has most thoroughly ignored.

The infrastructure investment cycle, if it corrects, will correct asymmetrically. The companies with the strongest balance sheets will absorb the adjustment. The sovereign funds have patience. The private credit vehicles have their own difficulties, but those are problems for sophisticated counterparties who accepted the terms knowingly.

The adjustment does not fall primarily on capital. It falls on the workforce that was promised reconstitution on the other side of the displacement. That workforce, its size, its composition, and the political consequences of its disappointment, is where this series is going next.

Three names on the right side of the wire

The argument above identifies where value goes. That leaves the question of how to express it in a portfolio. The following three names are not the most famous companies in the AI infrastructure theme. They are the ones where the thesis is not yet fully in the price.

A systematic screen of 381 companies across US, European, and Asian exchanges scored each name on valuation, capital deployment, earnings quality, and fundamental stability, then applied a qualitative overlay for thesis-specific factors: the type of constraint each company controls, the duration and contractual structure of its revenue, its position in the supply chain, and its direct exposure to hyperscaler customers. The result is a ranked universe. The three names below sit at the intersection of strong thesis fit and reasonable valuation, a combination that is rarer than it sounds in a theme the market has already noticed.

Cheniere Energy (LNG): The largest liquefied natural gas exporter in the United States, operating approximately forty-five percent of the country’s LNG export capacity across its Sabine Pass and Corpus Christi terminals. Cheniere sits at the intersection of two constraints that cannot be resolved within the buildout window: gas infrastructure and LNG terminal capacity, both of which take five to seven years to construct. Roughly ninety percent of its capacity is contracted under long-term take-or-pay Sale and Purchase Agreements with counterparties including Shell and TotalEnergies. At 7.4x EV/EBITDA with a 75.8 percent operating margin, it is the cheapest and highest-quality infrastructure name in the screen. The business model is a toll road: fixed-capacity processing with contracted throughput, generating predictable cash flows regardless of commodity price direction. As domestic gas demand rises to feed data centre power generation, the same tightening in the US gas market that benefits pipeline operators flows directly through Cheniere’s Henry Hub-indexed contracts.

Mitsubishi Heavy Industries (MHVYF): The third member of the global gas turbine oligopoly, alongside GE Vernova and Siemens Energy. Only three companies in the world can manufacture the large-frame gas turbines that new data centre power plants require. All three have order books sold out through 2029 or 2030. The physics of turbine manufacturing (specialised alloys, precision casting, and multi-year qualification cycles) means that no new entrant can resolve this constraint within the buildout window. GE Vernova, the most visible expression of this thesis, trades at 114.6x EV/EBITDA. Mitsubishi Heavy trades at 26.7x. The turbines are the same. The multiple is not. Mitsubishi Heavy is Tokyo-listed with a US ADR, which means thinner liquidity and yen currency exposure, both real costs. But a 77 percent discount to the pure-play peer for an identical physics-limited constraint is where systematic screening earns its keep, surfacing a name that qualitative research alone would not have found.
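For readers who want to check the arithmetic behind the 77 percent figure, here is a minimal sketch. It uses only the two EV/EBITDA multiples quoted above. The function name `discount_to_peer` is a hypothetical helper introduced for illustration, not part of the screen itself.

```python
def discount_to_peer(multiple: float, peer_multiple: float) -> float:
    """Fractional discount of one valuation multiple relative to a peer's.

    Hypothetical helper for illustration: 0.25 means the name trades
    25 percent below the peer on the same metric.
    """
    if peer_multiple <= 0:
        raise ValueError("peer multiple must be positive")
    return 1.0 - multiple / peer_multiple


# EV/EBITDA multiples as quoted in the text.
GE_VERNOVA_EV_EBITDA = 114.6
MITSUBISHI_HEAVY_EV_EBITDA = 26.7

discount = discount_to_peer(MITSUBISHI_HEAVY_EV_EBITDA, GE_VERNOVA_EV_EBITDA)
print(f"Mitsubishi Heavy discount to GE Vernova: {discount:.1%}")
```

The result rounds to the 77 percent quoted above. The same function works for any pair of names, provided both multiples are measured on the same basis.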

Siemens Energy (ENR.XETRA): The European member of the same turbine oligopoly, with the lowest forward price-to-earnings ratio of the three at 41.3x. Siemens Energy has been weighed down by losses in its Siemens Gamesa wind division, an overhang that has kept the gas turbine story discounted relative to GE Vernova. Earnings growth of 240 percent reflects the turnaround taking hold. If Gamesa stabilises as guided, gas turbine earnings become the dominant driver and the stock re-rates. The risk is known. So is the discount it creates.

The names that were excluded matter as much as the names that were included. GE Vernova is the purest expression of the turbine thesis but at 114.6x EV/EBITDA the market has priced the next five years of earnings growth and then some. Williams Companies controls the irreplaceable Transco pipeline corridor and has a twenty-year take-or-pay deal with Meta, but at 33x price-to-earnings it trades at more than double Energy Transfer’s multiple for a comparable asset type. Coherent, the named NVIDIA supplier for co-packaged optics, sits at 56x EV/EBITDA with a binary outcome: if the CPO transition accelerates, the price is justified, and if it stalls, there is a long way down. In each case, the thesis is correct. The valuation is the problem.

The honest framing is this: the AI infrastructure thesis is not a variant perception. It is consensus. Every sell-side firm has published a version of it. The edge, if there is one, is not in identifying the theme but in identifying which names express it at valuations where the risk and reward still favour the buyer. A turbine is a turbine whether it carries a GE logo or a Mitsubishi logo. The physics are identical. The multiples are not. That gap is the opportunity, and it will not persist indefinitely.

As always, a like or a share of this article means the world to me!

References

[1] Paul Kedrosky and Derek Thompson, “Yes, AI Is a Bubble. There Is No Question,” Plain English podcast, March 18, 2026. The rational bubble argument, the railroad parallel, the compressive versus expansive token distinction, and the chip depreciation analysis are drawn from this conversation.

[2] Michael W. Green, “The Thermodynamic Margin Call,” yesigiveafig.com, January 18, 2026. The fourteen percent grid bottleneck, the Triple Pincer, and the gold and real rates analysis are drawn from this essay.

[3] Sightline Climate, cited in Bloomberg News, 2026. The disclosed data centre pipeline figures and the active development and cancellation data are drawn from this source.

[4] Wood Mackenzie, cited in Bloomberg News, 2026. The transformer import volume data comparing 2022 and 2025 figures are drawn from this source.