The Noise Economy

Stray Narratives, Issue 06

Before turning to the infrastructure question I promised at the end of the last piece, I want to set down an observation about knowledge work, because it changes the scale of what we are measuring when we eventually get to the economics. It rests on a distinction that the automation debate has largely failed to make, and one that changes what we should be looking for.

The standard argument runs as follows. AI performs tasks that humans previously performed. The human is no longer required to perform those tasks. The human is therefore displaced. This is mechanically correct and, in many sectors, already observable. It is not, however, the whole picture.

There is a second category of effect that is analytically distinct, and in some respects more consequential. Here AI does not automate the task. It makes the task unnecessary. The task disappears not because a machine now does it, but because the problem it was solving ceases to exist. There is no displacement because there is nothing left to displace.

I have been calling this friction elimination, which is a somewhat dry formulation for something that has fairly dramatic economic implications. Bear with me.

Consider contract law, or more precisely, the interpretation work that contracts generate. Contracts are not written to be clear. They are written, in many cases, to create productive ambiguity, the kind that requires expert navigation every time a material question arises. Legal complexity of this variety is not accidental. It is structural. It sustains an entire layer of professional activity.

AI that simplifies the drafting of contracts, and that makes their meaning unambiguous at the point of execution, does not automate the contract reviewer. It removes the conditions under which contract review becomes necessary. The reviewer is not replaced. The reviewer’s function is dissolved.

Finance shows the same pattern, and I say this as a thirty-year veteran of a sector that has always charged handsomely for guiding clients through a landscape it had some interest in keeping unmapped. A meaningful share of what financial intermediaries do exists not because it creates value in the transaction itself, but because without intermediaries the buyer and the seller cannot find each other, cannot assess creditworthiness, or cannot navigate a regulatory architecture that was not designed with clarity as its primary objective. AI that connects counterparties directly, renders creditworthiness legible in real time, and translates regulatory requirements into plain operational instruction does not automate the intermediary. It publishes the map.

Consulting produces a version of the same effect. A substantial share of consulting revenue, perhaps the majority in some practice areas, is not the cost of strategic advice. It is the cost of organisational opacity. Two institutions that cannot speak each other’s language hire a translator. AI that makes data legible across institutional boundaries, that renders one organisation’s reporting comprehensible to another without human mediation, does not automate the consultant. It removes the opacity that made the consultant necessary.

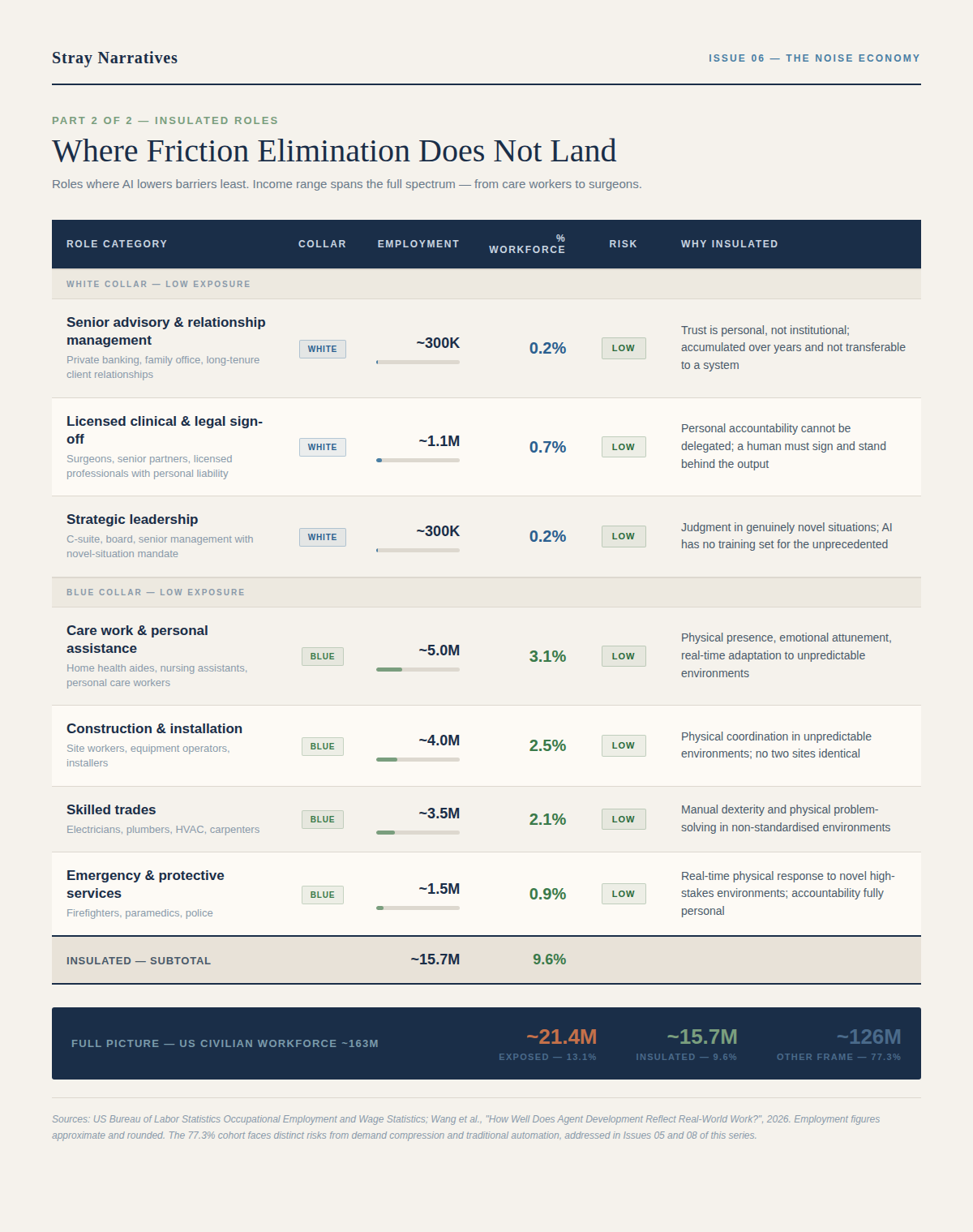

A research paper published earlier this year mapped the distribution of AI agent development across more than a thousand United States occupations, examining which roles are being actively targeted for AI application and which are not. [1] The concentration is striking, and it has direct bearing on where friction elimination is already arriving.

Three numbers in that table deserve attention before moving on.

The first is 13.1 percent. That is the share of the US civilian workforce sitting in roles with high or moderate friction elimination exposure, approximately 21 million workers, once office and administrative support is included alongside the professional categories most commentators focus on. Administrative support alone accounts for 16 million of those workers, which is the figure the automation debate most consistently underestimates. Scheduling, coordination, document management, internal reporting: these are not glamorous categories, but they are large ones, and they are already compressing.
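For readers who want to check the arithmetic behind those figures, the following is a minimal sketch in Python. It uses only the three numbers quoted above; the implied workforce total and the residual professional headcount are derived here for illustration and are not figures taken from the table itself.

```python
# Figures quoted in the text above.
exposed_share = 0.131       # share of the US civilian workforce with high or moderate exposure
exposed_workers_m = 21.0    # approximate exposed workers, in millions
admin_support_m = 16.0      # office and administrative support workers, in millions

# Derived for illustration (not stated in the source table):
# the total workforce implied by the share and the headcount.
implied_workforce_m = exposed_workers_m / exposed_share

# Share of the exposed group that sits in administrative support.
admin_share_of_exposed = admin_support_m / exposed_workers_m

# Exposed workers left over for the professional categories.
professional_m = exposed_workers_m - admin_support_m

print(f"Implied workforce: about {implied_workforce_m:.0f} million")
print(f"Administrative support share of exposed workers: {admin_share_of_exposed:.0%}")
print(f"Exposed workers in professional categories: about {professional_m:.0f} million")
```

On these numbers, administrative support makes up roughly three quarters of the exposed group, which is why leaving it out of the count understates the total so badly.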

The second number is 77 percent. That is the share of the workforce that sits outside the friction elimination frame entirely. Food service, retail, manufacturing, transport, healthcare support, government, education: these are primarily physical, site-based, or institutionally regulated roles, and AI’s primary mode of attack on them is not friction elimination but demand compression and traditional automation. Those are distinct risks with distinct timelines and distinct policy implications, addressed elsewhere in this series. [2] The point here is simply that friction elimination is not a universal story. It is a concentrated one.

The third number is the one the table does not show directly, but which follows from the first two. The 13.1 percent of workers facing friction elimination are not distributed evenly across the income spectrum. They are concentrated in the upper-middle income range, in the graduate-entry professional and administrative cohort that fills the office towers of every major city and whose spending sustains the restaurants, retailers, and service businesses around them. When that cohort contracts, the contraction does not stay contained. It radiates outward into the 77 percent.

The insulated categories, for the record, span a wider income range than is commonly assumed. At one end sit care workers and skilled tradespeople, whose protection comes from physical presence and manual dexterity. At the other end sit surgeons, senior lawyers, and relationship bankers, whose protection comes from licensed personal accountability and years of accumulated trust that is personal rather than institutional. The reader who recognises their own profession in that latter group may feel a degree of reassurance at this point.

There is an economic consequence here that is distinct from the wage suppression argument I made in the last issue, and I want to be precise about the distinction.

That piece described what happens to the price of genuine cognitive work when AI compresses the supply of cognitive capacity. Wages fall because the labour market adjusts to a new supply curve. The work remains. It is worth less.

The friction elimination dynamic is different. When AI compresses friction, the activity does not get automated and measured as machine output. It disappears from the economy entirely. GDP falls not because productivity declines, but because a large category of activity that was counted as output is revealed to have been the cost of navigating a world that was more opaque than it needed to be. Remove the opacity, and you remove the revenue stream that the opacity generated.

This is deflationary in a way that does not show up cleanly in productivity statistics. Productivity measures output per unit of input. If the output disappears because it was never genuinely necessary, the productivity framework does not capture what happened. The economy simply contracts in that sector, without any corresponding machine taking over the function. There is no robot to count. There is no AI system generating measurable output in the space where the human used to work. The space closes.
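A toy calculation makes the measurement point concrete. The sketch below is illustrative only: the two sectors, their output and hours figures, and the assumption that both have the same measured output per hour are hypothetical, and it assumes the hours released from the friction sector are not re-employed elsewhere.

```python
from dataclasses import dataclass


@dataclass
class Sector:
    name: str
    output: float  # measured output, arbitrary currency units (hypothetical)
    hours: float   # labour input, arbitrary hour units (hypothetical)


def gdp(sectors):
    """Total measured output across all sectors."""
    return sum(s.output for s in sectors)


def productivity(sectors):
    """Measured output per unit of labour input."""
    return gdp(sectors) / sum(s.hours for s in sectors)


# Before: part of measured output is the cost of navigating opacity.
before = [
    Sector("value-creating", output=800.0, hours=80.0),
    Sector("friction", output=200.0, hours=20.0),
]

# After: the friction sector closes. No machine output replaces it.
after = [s for s in before if s.name != "friction"]

print(f"GDP before: {gdp(before):.0f}, after: {gdp(after):.0f}")
print(f"Productivity before: {productivity(before):.1f}, after: {productivity(after):.1f}")
```

In this stylised case measured output falls by a fifth while output per hour does not move at all, so the productivity statistic registers nothing even though a whole category of activity has gone.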

In Issue 02, I introduced the reconstitution argument and noted immediately that the sequencing was already unfavourable: demand-side compression would arrive at consumer speed, while new contested work would reconstitute, if it reconstituted at all, at institutional speed. The gap between the two was the central problem, not the long-run destination. [2]

The friction elimination argument makes that sequencing problem harder still. The reconstitution scenario assumed a stable base of genuine economic activity from which recomposition would occur. It assumed, in other words, that the economy being left behind after automation was real. The friction elimination argument suggests that some portion of what we have been counting as economic output was the cost of opacity rather than the creation of value. The floor from which reconstitution proceeds is lower than even the cautious sequencing scenario required.

How much lower is a question I will return to. The physical infrastructure constraints on AI deployment that I raised at the close of the last piece, and which the next issue addresses in full, will determine the pace at which friction elimination actually propagates. The theoretical case is clear. The timing depends on the wire.


[1] Wang et al., “How Well Does Agent Development Reflect Real-World Work?”, 2026. The distribution of AI agent development across United States occupations, and the concentration of benchmarking activity in computer and mathematical work relative to the domains of largest employment, are drawn from this paper. Employment figures in the accompanying tables draw additionally on US Bureau of Labor Statistics Occupational Employment and Wage Statistics data.

[2] Stray Narratives, Issues 01, 02, 04, and 05: “The Sorting Machine,” “The Adoption Asymmetry,” “The Channel Controllers,” and “When Correlations Move to One.” The Verification-Substitution Matrix, the reconstitution and sequencing argument, and the wage suppression transmission argument are developed in those issues.